The Future is a Data Fabric and Mesh, Woven by AI Agents

Why the Future of Enterprise Data Mesh and Fabric Relies on Autonomous AI Agents and a Universal Semantic Protocol.

Howard Chi

Updated: Jan 09, 2026

Published: Jan 09, 2026

In my last post, I argued that the era of the “Great Centralization” is officially dead. We have moved past the naive belief that we can or should copy every byte of enterprise data into a single, monolithic warehouse. The physics of data gravity, cost, and latency have forced our hand: The future is distributed.

But accepting a distributed reality is only step one. Step two is much harder.

If we leave our data scattered across PostgreSQL, Snowflake, ClickHouse, and legacy Oracle without a plan, we haven’t built a modern architecture. We’ve built a disjointed collection of silos.

For the last decade, we have tried to solve this chaos with architecture diagrams. We drew boxes labeled “Data Fabric” and “Data Mesh.” We held meetings. We wrote manifestos. And for the most part, we failed.

Why? Because we were trying to weave a global tapestry using manual labor.

The future of enterprise data isn’t just about where the data sits. It is about who connects it. And in the next 18 months, that “who” is shifting from human engineers to Autonomous AI Agents.

We are entering the era of the Agentic Data Mesh, a self-healing, self-assembling data fabric woven in real time by AI.

The “Manual Loom” Problem: Why Fabric and Mesh Stalled and Failed

To understand where we are going, we have to look coldly at why the previous era’s two biggest ideas, Data Fabric and Data Mesh, struggled to gain traction in the real world.

1. The Broken Promise of the Data Fabric

The concept of the Data Fabric was seductive. It promised a unified, intelligent layer that sat on top of your distributed data, automatically integrating it and making it accessible. It was supposed to be the “connective tissue” of the enterprise.

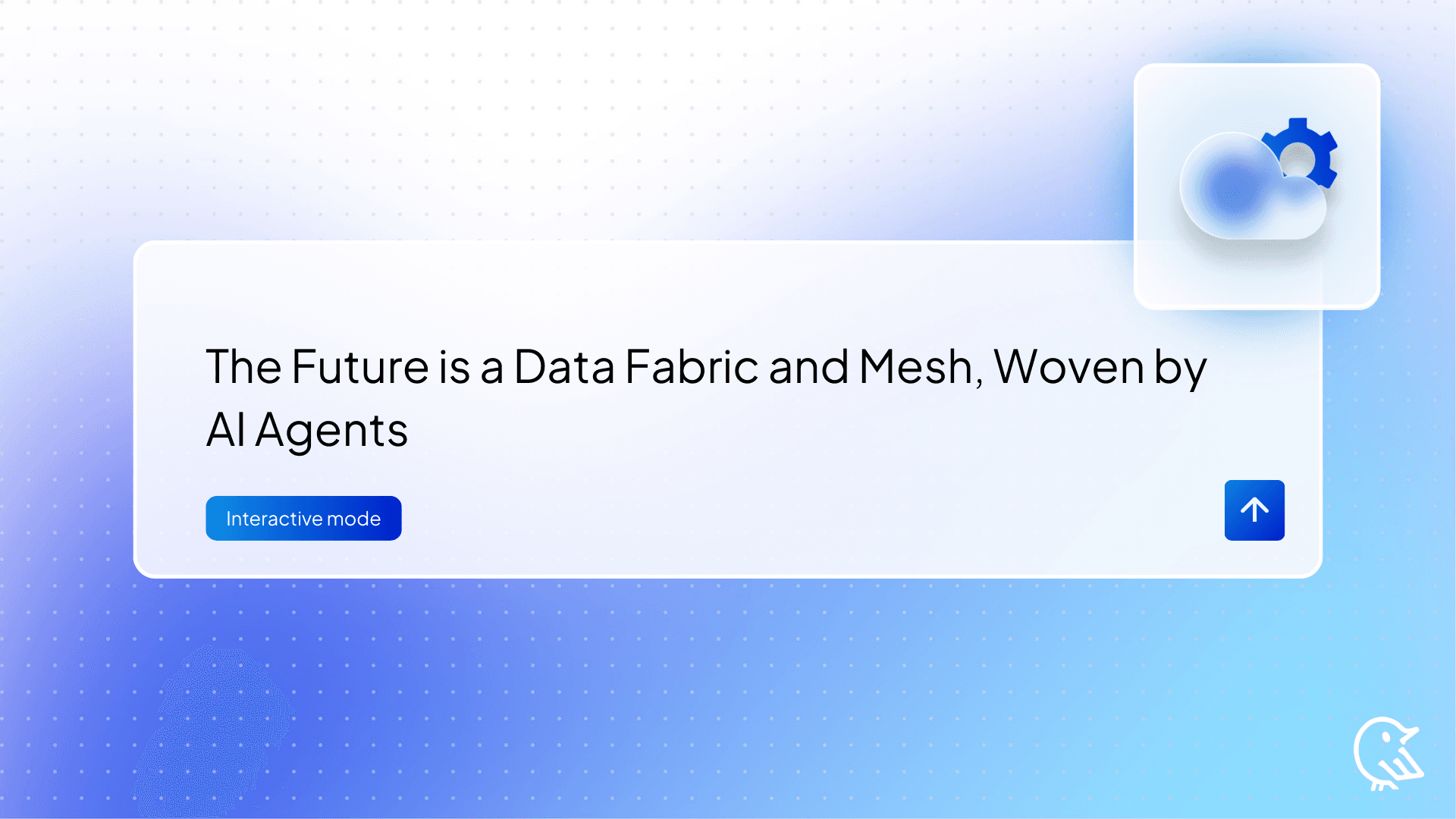

In practice, however, “Data Fabric” became a euphemism for “Active Metadata Management,” which in turn became a euphemism for “Manual Tagging Hell.”

To make a Data Fabric work prior to Generative AI, you needed an army of data stewards to manually define relationships. You had to explicitly tell the system, “Column A in the database is the same as Column B in the ERP.” You had to build rigid ETL pipelines to virtualize these connections.

The software promised automation, but the implementation required human sweat. The moment the schema changed in the source system, the fabric tore. The maintenance burden was so high that most companies reverted to simple point-to-point connections.

2. The Organizational Friction of the Data Mesh

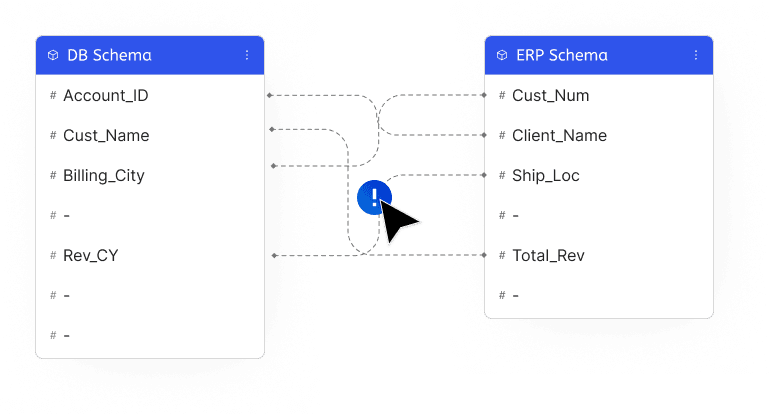

Then came Data Mesh. This was an organizational paradigm shift, not just a technological one. The core principle was “Data as a Product.” It argued that the central data team was a bottleneck, so we should push ownership out to the domains. The Marketing team should own marketing data; the Logistics team should own logistics data.

It was a brilliant philosophy that crashed into organizational reality.

When you went to the VP of Sales and said, “Congratulations, you are now a Data Domain Owner! You need to manage your data products, ensure schema stability, and provide SLAs to the rest of the company,” they looked at you like you were crazy.

They didn’t want to be data engineers.

Data Mesh failed because it imposed a high cognitive load and technical tax on non-technical teams. We asked business users to act like software engineers, and the friction killed the momentum.

This is the pivotal moment. We are transitioning from the “Manual Era” to the “Agentic Era.”

Generative AI and Large Language Models (LLMs) act as the universal translator that was missing from the previous equation. They solve the two fundamental problems that killed Fabric and Mesh: Complexity and Labor.

In the post-AI era, we don’t ask humans to stitch the fabric. We ask Agents to weave it.

Imagine a fleet of autonomous AI agents (let’s call them “Data Stewards”) that live on your network. They possess infinite patience, they read documentation instantly, and they understand semantic context better than most junior engineers.

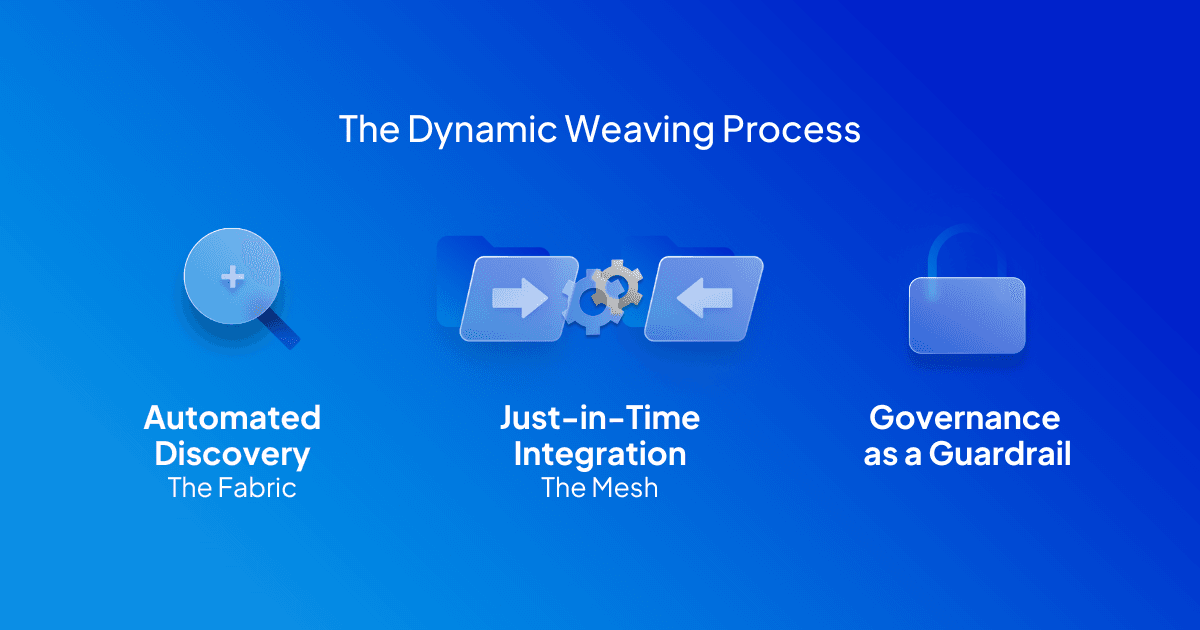

The Dynamic Weaving Process

Here is how the architecture changes:

- Automated Discovery (The Fabric): Instead of a human manually mapping schemas, an Agent scans your new Oracle instance. It reads the column headers, samples the data, and infers, “This looks like ‘Order History’.” It then checks your central semantic definitions and asks, “Should I map this to the global ‘Customer Orders’ entity?” A human clicks “Yes.” The fabric weaves itself.

- Just-in-Time Integration (The Mesh): In the old world, if Finance needed data from Engineering, we built a permanent ETL pipeline. In the new world, an Agent acts as the bridge. When a query comes in that requires data from both domains, the Agent dynamically constructs a federated query, retrieves the data, joins it, answers the question, and then dissolves the connection. We move from “Permanent Pipelines” to “Ephemeral Joins.”

- Governance as a Guardrail: Security in a distributed system is usually a nightmare. But AI Agents can act as real-time governance officers. Before any query is executed, a Governance Agent reviews the SQL against semantic policies. “Does this user have clearance for PII in the EU region?” If the answer is no, the Agent rewrites the query to redact the sensitive columns before it hits the database.
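To make the governance bullet above concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how Wren AI implements governance: the `User` and `Query` types, the `PII_COLUMNS` set, and the `pii:eu` clearance label are all hypothetical. The query is kept as a structured object rather than raw SQL so that redaction does not depend on fragile string parsing.

```python
from dataclasses import dataclass

# Hypothetical policy: fully qualified columns that count as PII.
PII_COLUMNS = {"customers.email", "customers.phone"}


@dataclass(frozen=True)
class User:
    name: str
    clearances: frozenset[str]


@dataclass(frozen=True)
class Query:
    table: str
    columns: tuple[str, ...]


def apply_policy(query: Query, user: User) -> Query:
    """Return the query unchanged for cleared users; otherwise replace
    every PII column with a NULL placeholder of the same name."""
    if "pii:eu" in user.clearances:  # hypothetical clearance label
        return query
    safe = tuple(
        f"NULL AS {col}" if f"{query.table}.{col}" in PII_COLUMNS else col
        for col in query.columns
    )
    return Query(query.table, safe)


def to_sql(query: Query) -> str:
    """Render the structured query as a SELECT statement."""
    return f"SELECT {', '.join(query.columns)} FROM {query.table}"


analyst = User("analyst", frozenset())
officer = User("officer", frozenset({"pii:eu"}))
q = Query("customers", ("id", "email", "country"))

redacted_sql = to_sql(apply_policy(q, analyst))
full_sql = to_sql(apply_policy(q, officer))
print(redacted_sql)
print(full_sql)
```

The point of the design is that the rewrite happens before anything reaches the database, so an uncleared user never sees the sensitive values, even transiently.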

This is the vision of the Self-Healing Mesh. The connections between data sources are no longer rigid pipes that burst under pressure. They are flexible threads, woven and re-woven by intelligent agents in response to the needs of the moment.
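The “Ephemeral Joins” idea can be sketched with nothing more than Python's standard `sqlite3` module. This is a toy under stated assumptions: the two in-memory databases stand in for separate Finance and Engineering domains, and the table names and rows are made up for illustration. A real federated engine would push work down to the sources rather than join in application memory.

```python
import sqlite3
from contextlib import closing


def make_finance_db() -> sqlite3.Connection:
    """Stand-in for the Finance domain's database (hypothetical data)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE budgets (project_id INTEGER, budget REAL)")
    conn.executemany("INSERT INTO budgets VALUES (?, ?)", [(1, 1000.0), (2, 500.0)])
    return conn


def make_engineering_db() -> sqlite3.Connection:
    """Stand-in for the Engineering domain's database (hypothetical data)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE projects (id INTEGER, name TEXT)")
    conn.executemany("INSERT INTO projects VALUES (?, ?)", [(1, "search"), (2, "billing")])
    return conn


def ephemeral_join() -> list[tuple[str, float]]:
    """Open both sources, join their rows in memory, and close both
    connections on the way out. Nothing permanent is left behind."""
    with closing(make_finance_db()) as fin, closing(make_engineering_db()) as eng:
        budgets = dict(fin.execute("SELECT project_id, budget FROM budgets"))
        projects = eng.execute("SELECT id, name FROM projects ORDER BY id").fetchall()
        return [(name, budgets[pid]) for pid, name in projects if pid in budgets]


result = ephemeral_join()
print(result)
```

The connections exist only for the duration of one question, which is the contrast with a permanent ETL pipeline that the list above draws.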

The “Last Mile” Problem: Hallucination and Context

However, if you are a seasoned engineer reading this, you are probably skeptical. You are thinking: “Great, you want to let an LLM write SQL against my production database? That sounds like a recipe for disaster.”

And you are right.

If you point GPT-4 or Claude at a raw database schema, you get chaos.

- The AI hallucinates table names.

- It misunderstands how you calculate “Gross Margin” (is it pre-tax, post-tax, or including refunds?).

- It tries to join tables that have no business being joined.

This is the Context Gap. AI Agents are incredible workers, but they are only as good as the instructions (the context) they are given. Without a standardized way to understand the meaning of your business data, an Agent is just a fast way to generate wrong answers.
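One cheap defense against hallucinated table and column names is to compile generated SQL against a schema-only copy of the database before running it anywhere real. The sketch below uses SQLite's `EXPLAIN`, which prepares a statement without executing it. The schema and the sample queries are hypothetical, and this only catches structural errors: it cannot tell you whether “Gross Margin” was calculated the way your business defines it.

```python
import sqlite3
from typing import Optional

# Hypothetical schema: structure only, no rows.
SCHEMA = """
CREATE TABLE orders (id INTEGER, customer_id INTEGER, amount REAL);
CREATE TABLE customers (id INTEGER, name TEXT);
"""


def check_sql(sql: str) -> Optional[str]:
    """Return None if the statement compiles against the known schema,
    otherwise return the database's error message."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.executescript(SCHEMA)
        # EXPLAIN prepares the statement, so unknown tables and columns
        # are reported without the query itself being run.
        conn.execute(f"EXPLAIN {sql}")
        return None
    except sqlite3.Error as exc:
        return str(exc)
    finally:
        conn.close()


ok = check_sql("SELECT amount FROM orders")
bad_table = check_sql("SELECT * FROM order_history")
bad_column = check_sql("SELECT total FROM orders")
print(ok, bad_table, bad_column, sep="\n")
```

A check like this closes part of the gap, but only the part a compiler can see. The semantic part still needs a blueprint.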

The Agents provide the labor. But they need a blueprint.

Where Wren AI Comes In: The Pattern for the Weavers

This is precisely where Wren AI fits into the modern data stack. We are not just building another text-to-SQL chatbot. We are building the Semantic Operating System for the Agentic Data Mesh.

To make this future work, you need three layers:

- The Raw Data: Your distributed databases (Snowflake, Postgres, BigQuery).

- The Weaver: The AI Agent (Wren AI or Custom Agents).

- The Pattern: The Semantic Layer.

Wren AI is that pattern. We provide the “Brain” that prevents the Agents from hallucinating. We do this through two critical technical innovations: MDL and MCP.

1. MDL (Modeling Definition Language): The DNA of Your Business

Agents cannot guess your business logic; they must be told. Wren AI uses MDL, a modeling definition language that codifies your business metrics, relationships, and calculations into a format that both humans and AI can understand.

In the Wren ecosystem, you define “Revenue” once in MDL. You define the relationship between “Users” and “Orders” once. When an Agent needs to answer a question, it doesn’t look at the raw, messy database schema. It looks at the MDL. The MDL acts as the “Constitution” for the AI. It ensures that, no matter which agent asks and which database the data resides in, the answer is mathematically consistent.
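To illustrate the “define it once” idea, here is a small Python sketch. It is not Wren AI's MDL syntax, which this post does not show. The `Metric` and `Relationship` classes, the `compile_metric` helper, and the table and column names are all hypothetical stand-ins for what a modeling language of this kind captures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str
    expression: str  # the one agreed SQL expression for this metric


@dataclass(frozen=True)
class Relationship:
    left: str
    right: str
    condition: str  # the one agreed join condition between the two models


# Hypothetical definitions, written once and reused by every agent.
REVENUE = Metric("revenue", "SUM(orders.amount)")
USERS_ORDERS = Relationship("users", "orders", "users.id = orders.user_id")


def compile_metric(metric: Metric, rel: Relationship, group_by: str) -> str:
    """Build SQL from the shared definitions instead of letting each
    agent guess the expression or the join."""
    return (
        f"SELECT {group_by}, {metric.expression} AS {metric.name} "
        f"FROM {rel.left} JOIN {rel.right} ON {rel.condition} "
        f"GROUP BY {group_by}"
    )


sql = compile_metric(REVENUE, USERS_ORDERS, "users.country")
print(sql)
```

Because the expression and the join live in one place, two agents asking for revenue by country produce the same query, which is what makes the answers consistent.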

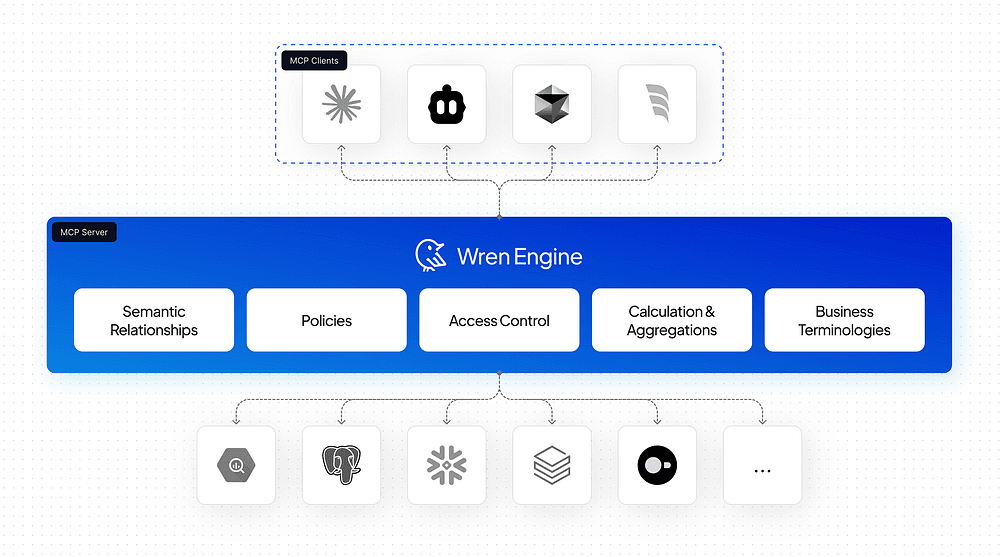

2. MCP (Model Context Protocol): The Universal Adapter

This is the game-changer. We are betting big on the Model Context Protocol (MCP).

In the past, every BI tool was a walled garden. If you wanted to use AI on your data, you had to use their AI inside their interface.

MCP changes the industry's physics. It is a standard that allows an AI Client (such as Claude Desktop or a custom agent) to connect to a Data Server (Wren AI) and “download” the context it needs.

Think of Wren AI as the MCP Server.

- We understand the semantics of your data.

- We hold the connections to your distributed databases.

- We hold the security rules.

When an AI Agent (the MCP Client) connects to Wren AI, it says: “I have a user asking about Q3 sales. Give me the tools and context to answer this.” Wren AI responds: “Here are the relevant schemas, here is the definition of ‘Sales’, and here are the authorized SQL templates you can use.”

The Agent then uses that context to generate SQL grounded in those definitions, which Wren AI executes against the source databases.
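The shape of that exchange can be sketched in a few lines of Python. MCP messages are JSON-RPC 2.0, and `tools/list` and `tools/call` are methods from the MCP specification, but everything else here is an assumption made for illustration: the `get_definition` tool, the `DEFINITIONS` table, and the in-process `handle` function are hypothetical, and the sketch skips initialization, transport, and tool input schemas. It does not use an MCP SDK and is not Wren AI's server.

```python
import json

# Hypothetical semantic definitions the server is willing to share.
DEFINITIONS = {"sales": "SUM(orders.amount) WHERE orders.status = 'completed'"}

# Simplified tool listing (a real listing also describes each tool's inputs).
TOOLS = [{"name": "get_definition", "description": "Look up a business term."}]


def handle(raw: str) -> str:
    """Answer one JSON-RPC request with a JSON-RPC response."""
    req = json.loads(raw)
    method, params = req["method"], req.get("params", {})
    if method == "tools/list":
        result = {"tools": TOOLS}
    elif method == "tools/call" and params.get("name") == "get_definition":
        term = params["arguments"]["term"].lower()
        text = DEFINITIONS.get(term, "unknown term")
        result = {"content": [{"type": "text", "text": text}]}
    else:
        # -32601 is the JSON-RPC 2.0 code for "Method not found".
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": req.get("id"), "error": error})
    return json.dumps({"jsonrpc": "2.0", "id": req["id"], "result": result})


# The client first discovers the tools, then asks for the definition of "Sales".
listing = json.loads(handle(json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})))
answer = json.loads(handle(json.dumps({
    "jsonrpc": "2.0", "id": 2, "method": "tools/call",
    "params": {"name": "get_definition", "arguments": {"term": "Sales"}},
})))
print(answer["result"]["content"][0]["text"])
```

The client never sees the raw schema. It sees only the tools and definitions the server chooses to expose, which is where the semantics and the security rules get enforced.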

The Vision: From Infrastructure to Intelligence

What does this mean for the future of your company?

It means the end of the “Data Engineering Bottleneck.”

Today, if you are an executive, you are likely frustrated by how long it takes to get answers. You ask a question, and your data team says, “We need two weeks to ingest that data, model it, and build a dashboard.” That latency is the cost of the manual loom.

In the future we are building, the latency collapses.

- The Data Fabric is instant: You spin up a new database, and the Agents map it.

- The Data Mesh is fluid: Business units own their data simply by describing it in natural language, which Wren AI converts to MDL.

- Access is universal: You can ask questions via Slack, a dashboard, or a voice agent, and because they all connect to the Wren Semantic Engine via MCP, they all get the same truth.

The entrepreneurs who win in the next decade won’t be the ones with the biggest data lakes. They will be the ones with the most intelligent mesh.

They will realize that Semantics is the new Infrastructure.

If you control the semantics (the definitions, the logic, the meaning), you control the business. The AI agents are just the workforce waiting for your instructions.

At Wren AI, we are building the loom, the pattern, and the protocol. We are ready to help you weave the future.

Get Started Today

Ready to experience enterprise GenBI?

🚀 Try Wren AI: Visit getwren.ai for a free trial. Connect your databases in minutes and start asking questions in plain English.

🔗 Star Wren AI on GitHub: Join 1.6k+ developers building the future of conversational business intelligence.

💬 Join the Community: Connect with data teams already scaling their analytics with Wren AI’s semantic-driven approach.

Remember: The future of BI is conversational. The question isn’t whether AI will transform how we work with data — it’s whether you’ll lead that transformation or follow it.

Don’t wait for perfect data. Start where you are, learn as you go, and let Wren AI handle the complexity of turning questions into insights.
